Organizations face a critical challenge when working with Large Language Models: finding the right model and crafting the perfect prompt for each specific use case. With multiple providers offering various models - each with its own strengths and quirks - the process of discovering the optimal combination can be both daunting and time-consuming.

The Challenge of LLM Selection and Prompt Optimization

Every LLM behaves differently. GPT-4 excels at nuanced reasoning. Claude handles long-context tasks with precision. Mistral delivers speed for structured outputs. But knowing which model fits your use case - and how to prompt it effectively - requires systematic testing, not guesswork.

“The difference between a good prompt and a great one can mean a 40% improvement in output quality - but finding that difference manually across multiple models is nearly impossible.”

The real cost: Teams often spend weeks manually testing model and prompt combinations, only to discover they have been optimizing for the wrong variables. Without a structured approach, prompt engineering becomes trial and error at scale.

Introducing Strongly.AI's Prompt Comparison Feature

At Strongly.AI, we understand the importance of using the full potential of LLMs while maintaining efficiency in your workflows. That is why we developed our Prompt Comparison feature - designed to streamline the entire process of model selection and prompt optimization into a single, visual workflow.

Workflow: Prompt → Models → Comparison → Results → Optimal

Key Capabilities

Multi-Provider Selection

Select from multiple LLM providers and models in a single comparison run. Test across OpenAI, Anthropic, Mistral, and more simultaneously.

Fine-Grained Parameters

Configure temperature, top-p, max tokens, and other available parameters independently for each model in your comparison.

Custom Prompt Stacks

Set custom system prompts, assistant prompts, and user prompts. Test how different prompt strategies affect output quality.

Side-by-Side Evaluation

Compare responses visually in a side-by-side view, making it easy to evaluate tone, accuracy, and completeness across models.
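As a rough illustration of what a comparison run involves, the sketch below models the capabilities above as plain Python data. The class names, field names, default values, and model labels are hypothetical and chosen only for this example; they are not Strongly.AI's actual schema or real provider model identifiers.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class ModelConfig:
    """One model under test with its own sampling parameters (hypothetical shape)."""
    provider: str
    model: str
    temperature: float = 0.7
    top_p: float = 1.0
    max_tokens: int = 512


@dataclass(frozen=True)
class PromptStack:
    """A system / assistant / user prompt triple (hypothetical shape)."""
    name: str
    system: str
    assistant: str
    user: str


def comparison_grid(models, stacks):
    """Pair every model configuration with every prompt stack."""
    return list(product(models, stacks))


# Placeholder labels, not real API model identifiers.
models = [
    ModelConfig("openai", "gpt-4", temperature=0.7),
    ModelConfig("anthropic", "claude-3.5", temperature=0.2, max_tokens=256),
]
stacks = [
    PromptStack("empathy", "You are a warm support agent.",
                "Acknowledge feelings first.", "Where is my order?"),
    PromptStack("efficiency", "You are a concise support agent.",
                "Address the main concern directly.", "Where is my order?"),
]

grid = comparison_grid(models, stacks)
for model, stack in grid:
    print(f"{model.provider}/{model.model} (T={model.temperature}) x {stack.name}")
```

Keeping parameters on the model and prompts on the stack means either side can be varied independently, which is what makes a side-by-side view meaningful.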

Why Use Prompt Comparison?

Our Prompt Comparison feature delivers measurable advantages across the entire LLM development lifecycle:

Optimize Model Selection

Quickly identify which LLM performs best for your specific use case, ensuring you always use the most effective AI technology available.

Refine Prompts Systematically

Experiment with different system and assistant prompts to see how they affect output across various models, then craft the perfect prompt for your needs.

Save Time and Resources

Instead of manually testing each model and prompt combination, compare multiple options simultaneously - reducing optimization time from weeks to minutes.

Ensure Consistency

Test the same user prompt across different models to ensure consistent performance and output quality regardless of the underlying LLM.

Adapt to Changing Needs

As requirements evolve or new models become available, easily re-evaluate your choices to maintain peak performance over time.

Prompt Comparison transforms model selection from guesswork into a data-driven process. By testing multiple configurations simultaneously, teams can make informed decisions in minutes rather than weeks.

Real-World Use Cases

The Prompt Comparison feature is invaluable across a wide range of enterprise scenarios:

Content Creation

Compare how different models generate marketing copy, product descriptions, or creative writing. Find the voice that best matches your brand.

Customer Support

Test response quality and tone for customer inquiries across multiple models to ensure the best possible user experience.

Data Analysis

Evaluate how different models interpret and summarize complex datasets, generate insights, and handle structured data outputs.

Code Generation

Compare code output quality, accuracy, and best-practice adherence across various programming tasks and languages.

Pro tip: Language translation is another powerful use case. Assess the nuance and accuracy of translations for different language pairs across models to find the best fit for your audience.

Direct Integration with Your Workflow

We designed Prompt Comparison with flexibility and ease of use in mind. Once you have found the perfect combination of model, parameters, and prompts, the real power begins.

Save and Reuse: Optimized configurations can be saved and instantly accessed in the StronglyGPT UI or via our REST API - ensuring consistency and efficiency across all your AI-powered workflows.
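One way to think about a saved configuration is as a small, serializable record. The sketch below round-trips such a record through JSON. The `SavedConfig` fields and the example values are assumptions made for illustration; the actual payload accepted by the StronglyGPT UI or the REST API is not described in this article.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class SavedConfig:
    """A winning model + parameters + prompts bundle (hypothetical schema)."""
    name: str
    provider: str
    model: str
    temperature: float
    top_p: float
    max_tokens: int
    system_prompt: str
    assistant_prompt: str


def dump_config(config: SavedConfig) -> str:
    """Serialize to stable, human-diffable JSON (sorted keys)."""
    return json.dumps(asdict(config), indent=2, sort_keys=True)


def load_config(text: str) -> SavedConfig:
    """Rebuild a SavedConfig from JSON produced by dump_config."""
    return SavedConfig(**json.loads(text))


# Illustrative values only.
config = SavedConfig(
    name="support-empathy-v1",
    provider="openai",
    model="gpt-4",
    temperature=0.7,
    top_p=1.0,
    max_tokens=512,
    system_prompt="You are an AI customer support agent for EcoShop.",
    assistant_prompt="Start by acknowledging the customer's feelings.",
)

text = dump_config(config)
restored = load_config(text)
print(restored == config)
```

Sorting keys keeps the saved file stable across runs, so changes to a configuration show up cleanly in version control.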

Getting Started with Prompt Comparison

Ready to optimize your LLM interactions? Here is how to get started in six straightforward steps:

1. Log In and Navigate

Sign in to your Strongly.AI account and navigate to the Prompt Comparison feature from the main dashboard.

2. Select Models

Choose the LLM providers and models you want to compare. Mix and match across providers for the broadest evaluation.

3. Configure Parameters

Set the desired temperature, top-p, max tokens, and other parameters for each model independently.

4. Craft Your Prompts

Enter your system prompt, assistant prompt, and the user prompt you want to test across all selected models.

5. Run and Review

Execute the comparison and review the results side-by-side. Evaluate each response for tone, accuracy, and completeness.

6. Iterate and Save

Refine as needed, then save your optimal configuration for reuse in StronglyGPT or via the REST API.
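For readers who want to see the shape of steps 2 through 5 in code, here is a minimal sketch of a comparison loop. It is not Strongly.AI's implementation: `run_comparison` and `fake_complete` are hypothetical names, and the provider call is left as a caller-supplied function so the example runs offline.

```python
from concurrent.futures import ThreadPoolExecutor


def run_comparison(configs, user_prompt, complete):
    """Send the same user prompt to every configuration in parallel.

    `complete(config, user_prompt)` is supplied by the caller and is where a
    real provider call would go; it is deliberately left abstract here.
    Returns a list of (config_name, response_text) in input order.
    """
    with ThreadPoolExecutor(max_workers=max(1, len(configs))) as pool:
        futures = [pool.submit(complete, cfg, user_prompt) for cfg in configs]
        return [(cfg["name"], fut.result()) for cfg, fut in zip(configs, futures)]


def fake_complete(config, user_prompt):
    """Stand-in for a real provider call (assumption, for demonstration only)."""
    return f"[{config['model']} @ T={config['temperature']}] reply to: {user_prompt}"


configs = [
    {"name": "A", "model": "model-a", "temperature": 0.7},
    {"name": "B", "model": "model-b", "temperature": 0.2},
]

results = run_comparison(configs, "My order is late.", fake_complete)
for name, text in results:
    print(f"{name}: {text}")
```

Threads suit this workload because each call mostly waits on the network; results are collected in input order so the side-by-side layout stays stable between runs.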

Prompt Comparison in Action: A Real-World Example

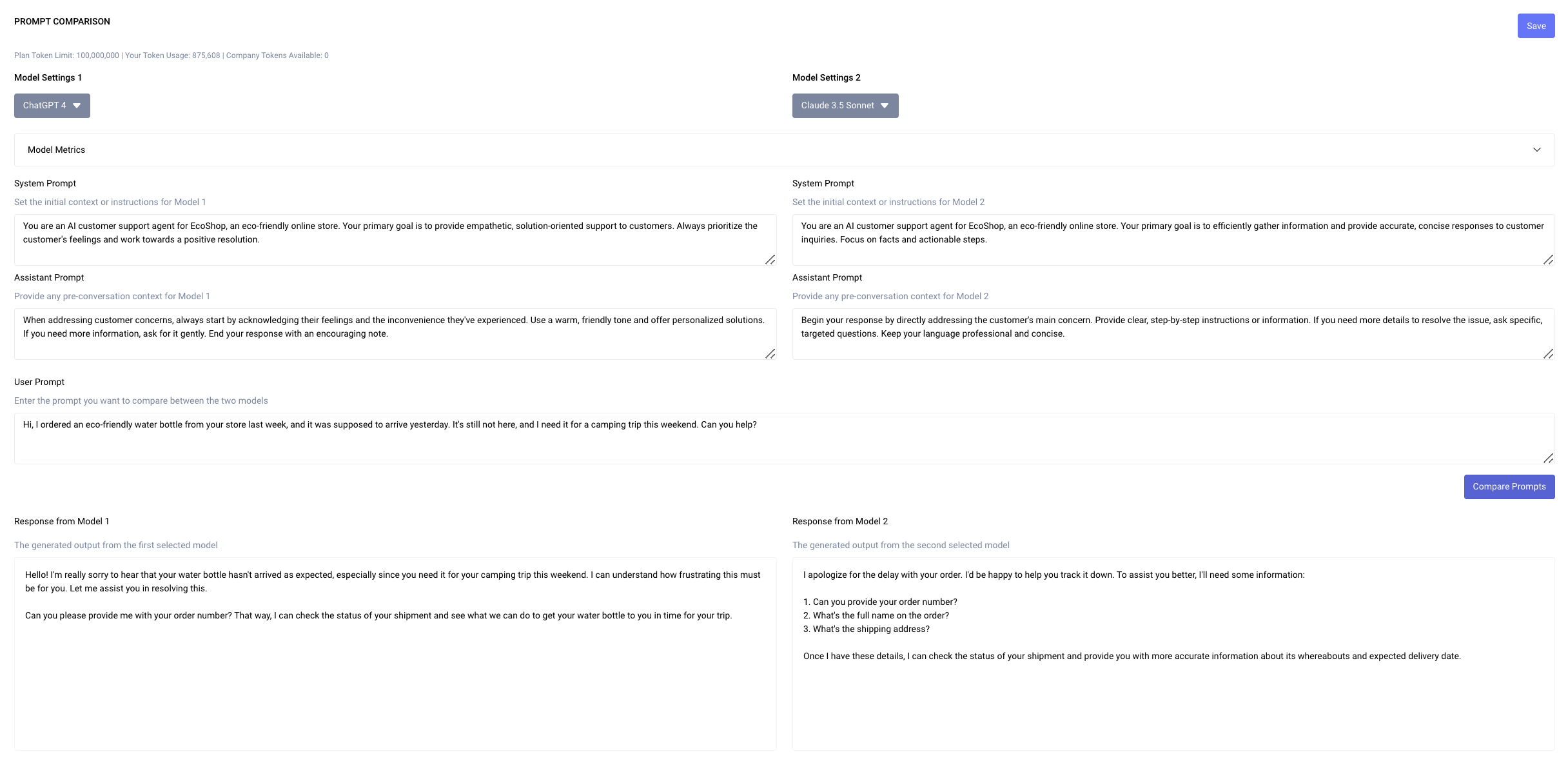

To illustrate the power of Prompt Comparison, let us walk through a practical customer support scenario. We will compare how different system and assistant prompts affect the response to a customer inquiry about a late delivery.

Scenario: Customer Support for Late Delivery

We will use two approaches - one focused on empathy and problem-solving, the other on efficiency and information gathering - across two leading models.

Models Selected

- GPT-4 - paired with the empathy-focused prompt strategy

- Claude 3.5 - paired with the efficiency-focused prompt strategy

System Prompts

System Prompt 1

Warm, solution-oriented approach

You are an AI customer support agent for EcoShop, an eco-friendly online store. Your primary goal is to provide empathetic, solution-oriented support to customers. Always prioritize the customer's feelings and work towards a positive resolution.

System Prompt 2

Fast, fact-driven approach

You are an AI customer support agent for EcoShop, an eco-friendly online store. Your primary goal is to efficiently gather information and provide accurate, concise responses to customer inquiries. Focus on facts and actionable steps.

Assistant Prompts

Assistant Prompt 1

When addressing customer concerns, always start by acknowledging their feelings and the inconvenience they have experienced. Use a warm, friendly tone and offer personalized solutions. If you need more information, ask for it gently. End your response with an encouraging note.

Assistant Prompt 2

Begin your response by directly addressing the customer's main concern. Provide clear, step-by-step instructions or information. If you need more details to resolve the issue, ask specific, targeted questions. Keep your language professional and concise.
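To make the three-part prompt stack concrete, the sketch below assembles System Prompt 1 and Assistant Prompt 1 into a role/content message list, a shape that many chat-style APIs accept in some form. How Prompt Comparison actually delivers the assistant prompt to each provider is not stated here; sending it as a prior assistant turn is an assumption, and `build_messages` is a hypothetical helper. The user prompt is left as a placeholder.

```python
def build_messages(system_prompt, assistant_prompt, user_prompt):
    """Assemble a role/content message list from a three-part prompt stack.

    Assumption: the assistant prompt is sent as a prior assistant turn.
    Empty or whitespace-only parts are skipped.
    """
    parts = [
        ("system", system_prompt),
        ("assistant", assistant_prompt),
        ("user", user_prompt),
    ]
    return [
        {"role": role, "content": text.strip()}
        for role, text in parts
        if text and text.strip()
    ]


SYSTEM_PROMPT_1 = (
    "You are an AI customer support agent for EcoShop, an eco-friendly online store. "
    "Your primary goal is to provide empathetic, solution-oriented support to customers. "
    "Always prioritize the customer's feelings and work towards a positive resolution."
)
ASSISTANT_PROMPT_1 = (
    "When addressing customer concerns, always start by acknowledging their feelings "
    "and the inconvenience they have experienced. Use a warm, friendly tone and offer "
    "personalized solutions. If you need more information, ask for it gently. "
    "End your response with an encouraging note."
)
# Placeholder: substitute the customer message being tested.
USER_PROMPT = "<customer message goes here>"

messages = build_messages(SYSTEM_PROMPT_1, ASSISTANT_PROMPT_1, USER_PROMPT)
for message in messages:
    print(message["role"], "->", message["content"][:60])
```

Swapping in System Prompt 2 and Assistant Prompt 2 while keeping the same user prompt yields the second stack in the comparison.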

User Prompt

Comparison of Responses

Empathy-Focused Response

System Prompt 1 + Assistant Prompt 1

Hello! I'm really sorry to hear that your water bottle hasn't arrived as expected, especially since you need it for your camping trip this weekend. I can understand how frustrating this must be for you. Let me assist you in resolving this.

Can you please provide me with your order number?

That way, I can check the status of your shipment and see what we can do to get your water bottle to you in time for your trip.

Efficiency-Focused Response

System Prompt 2 + Assistant Prompt 2

I apologize for the delay with your order. I'd be happy to help you track it down. To assist you better, I'll need some information:

- Can you provide your order number?

- What's the full name on the order?

- What's the shipping address?

Once I have these details, I can check the status of your shipment and provide you with more accurate information about its whereabouts and expected delivery date.

Analysis

The different combinations of model, system prompt, and assistant prompt led to notably different responses:

- GPT-4 with the empathy-focused prompts produced a longer, more personalized response that emphasizes emotional support. It acknowledges the customer's feelings multiple times and offers reassurance before gathering information.

- Claude 3.5 with the efficiency-focused prompts delivered a more concise, action-oriented response. It immediately focuses on gathering specific information to resolve the issue and provides clear next steps.

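Both responses address the core issue, but in distinctly different styles - each suited to different customer personas and brand voices. This is the power of systematic prompt comparison. Because each prompt strategy ran on a different model in this example, separating the effect of the prompts from the effect of the model would require running both strategies on both models.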
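Side-by-side reading can be supplemented with a few crude numbers. The sketch below computes word count, question count, and a keyword-based empathy signal for the two responses quoted above. The marker list and the `response_metrics` helper are assumptions made for illustration; this is a rough heuristic, not the evaluation method used by Prompt Comparison.

```python
# Hypothetical marker stems; a real evaluation would need something sturdier.
EMPATHY_MARKERS = ("sorry", "apolog", "understand", "frustrat")


def response_metrics(text):
    """Return simple surface metrics for one model response."""
    lowered = text.lower()
    return {
        "words": len(text.split()),
        "questions": text.count("?"),
        # Number of distinct marker stems that appear at least once.
        "empathy_markers": sum(marker in lowered for marker in EMPATHY_MARKERS),
    }


empathy_response = (
    "Hello! I'm really sorry to hear that your water bottle hasn't arrived as expected, "
    "especially since you need it for your camping trip this weekend. I can understand "
    "how frustrating this must be for you. Let me assist you in resolving this. "
    "Can you please provide me with your order number? "
    "That way, I can check the status of your shipment and see what we can do to get "
    "your water bottle to you in time for your trip."
)
efficiency_response = (
    "I apologize for the delay with your order. I'd be happy to help you track it down. "
    "To assist you better, I'll need some information: "
    "Can you provide your order number? What's the full name on the order? "
    "What's the shipping address? "
    "Once I have these details, I can check the status of your shipment and provide you "
    "with more accurate information about its whereabouts and expected delivery date."
)

empathy = response_metrics(empathy_response)
efficiency = response_metrics(efficiency_response)
print("empathy-focused:   ", empathy)
print("efficiency-focused:", efficiency)
```

Numbers like these do not replace human judgment of tone, but they make it easier to spot when a prompt change shifts a response in the intended direction.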

“This comparison demonstrates the importance of carefully crafting system and assistant prompts to align with your brand voice and customer service goals.”

Conclusion: Enabling AI-Driven Results

Strongly.AI's Prompt Comparison feature is more than a tool - it is a gateway to accessing the full potential of LLMs in your organization. By providing a systematic, efficient way to optimize your AI interactions, we enable you to push the boundaries of what is possible with AI.

Whether you are fine-tuning customer interactions, streamlining content creation, or developing advanced AI applications, our Prompt Comparison feature ensures you always use the best LLM and prompt combination for your unique needs.

Stop guessing which model and prompt combination works best. With Prompt Comparison, you can test, measure, and optimize your AI interactions in a single workflow - turning weeks of trial-and-error into minutes of data-driven decisions.

Ready to Maximize Your LLM Performance?

See how Strongly.AI's Prompt Comparison feature can improve your AI-powered workflows.
