Large Language Models have transformed the landscape of AI applications, but their effectiveness depends heavily on one thing - the quality of the prompts they receive. A brilliantly engineered prompt can extract expert-level reasoning from an LLM, while a poorly constructed one produces vague, unusable output. The difference between the two is prompt engineering, and it has become a discipline unto itself.

Strongly.AI has developed an agent system that uses customizable agent chains to optimize prompts, with the goal of improving both output quality and efficiency in every LLM interaction.

The Strongly.AI Approach to Prompt Optimization

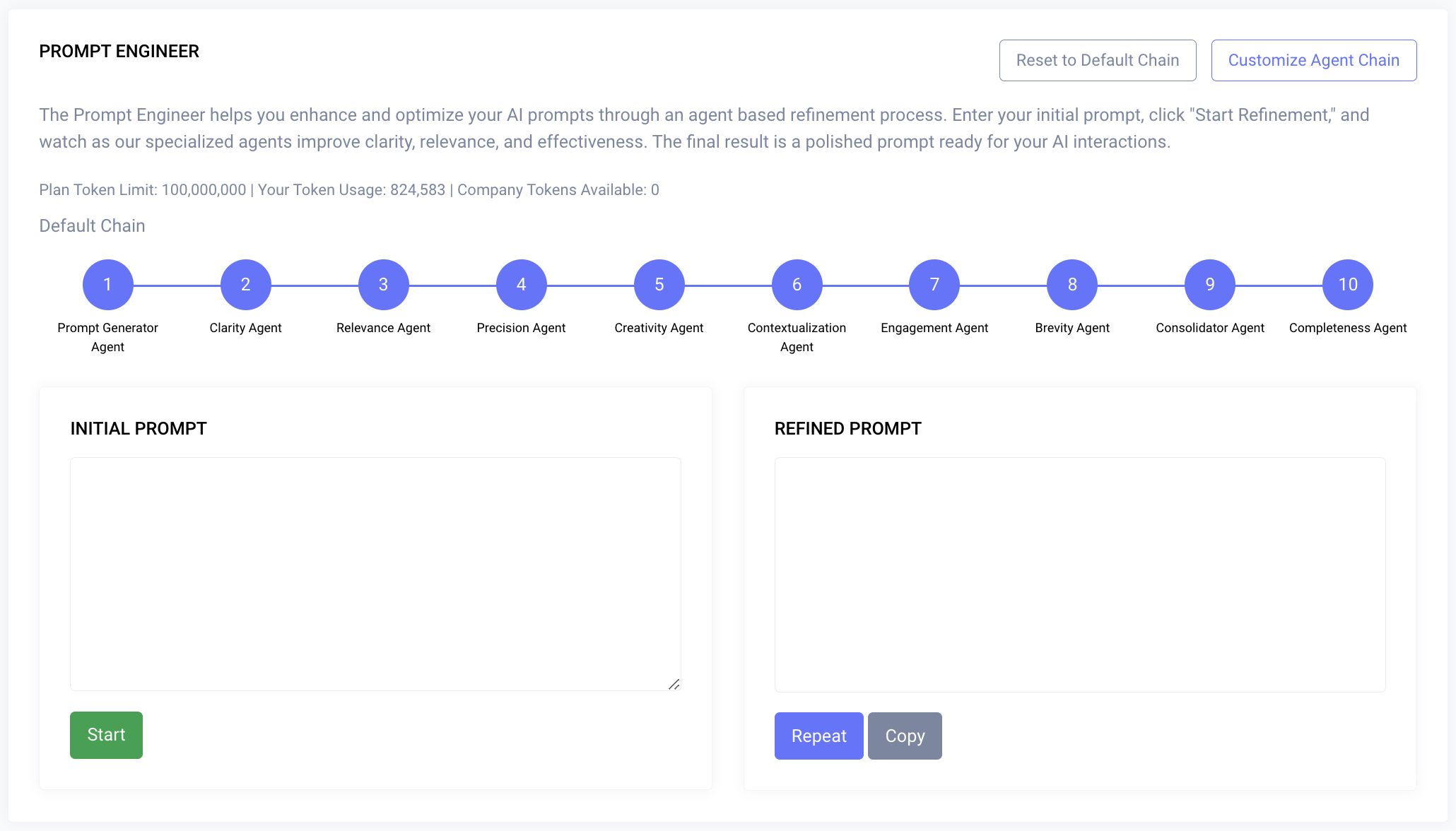

Strongly.AI's approach utilizes a chain of specialized agents, each designed to refine a specific aspect of a prompt. This multi-stage optimization process ensures that the final prompt is not only effective but also tailored to the unique requirements of each task.

Rather than relying on a single pass of manual editing, each agent in the chain applies focused intelligence to one dimension of prompt quality - from clarity to relevance to creative diversity. The result is a prompt that has been systematically optimized across every dimension that matters.

Users can create their own custom agents and chains, so the pipeline can be adapted to specific use cases and industries. No two prompting challenges are the same - and your optimization pipeline shouldn't be either.

“The difference between a good prompt and a great prompt is not a single insight - it's a systematic process of refinement across every dimension that impacts output quality.”

The Prompt Optimization Agent Chain

At the core of Strongly.AI's approach is a sequential pipeline where your raw prompt flows through specialized agents, each contributing a targeted refinement before passing it forward.
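As a rough mental model, a sequential pipeline behaves like a fold: each agent takes a prompt and returns a refined prompt, and the chain hands every output to the next agent. The sketch below illustrates that assumption and is not Strongly.AI's implementation. `run_chain` and both toy agents are hypothetical, and in practice each agent would call an LLM instead of applying a fixed string transformation.

```python
from functools import reduce
from typing import Callable, Sequence

# Assumption: an agent is any function from prompt text to refined prompt text.
Agent = Callable[[str], str]


def run_chain(prompt: str, agents: Sequence[Agent]) -> str:
    """Pass the prompt through each agent in order, feeding each output forward."""
    return reduce(lambda current, agent: agent(current), agents, prompt)


# Hypothetical toy agents standing in for LLM-backed ones.
def normalize_whitespace(prompt: str) -> str:
    # Collapse runs of spaces and line breaks into single spaces.
    return " ".join(prompt.split())


def require_citations(prompt: str) -> str:
    # Append an instruction asking the model to cite its sources.
    return prompt + " Cite a source for each factual claim."


if __name__ == "__main__":
    raw = "Tell me   about the effects of\nclimate change on marine ecosystems."
    print(run_chain(raw, [normalize_whitespace, require_citations]))
    # Tell me about the effects of climate change on marine ecosystems. Cite a source for each factual claim.
```

Because the chain is just an ordered sequence, the same `run_chain` works for any number of agents, including an empty chain that returns the prompt unchanged.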

Strongly.AI provides a set of pre-configured agents, each targeting a distinct quality dimension. Together, they form a comprehensive optimization pipeline.

Clarity Agent

Rewrites the prompt to remove ambiguity, keeping the language straightforward and easy to interpret.

Relevance Agent

Analyzes the prompt to ensure it is directly relevant to the intended task or query, removing tangential elements.

Precision Agent

Refines the prompt to elicit precise and accurate responses from the LLM, adding specificity where needed.

Creativity Agent

Introduces elements that encourage more creative and diverse outputs, expanding the solution space.

Engagement Agent

Structures the prompt to keep the LLM focused on the task throughout extended interactions.

Brevity Agent

Condenses the prompt to its most essential elements, removing redundancy while preserving intent.

Consolidator Agent

Integrates refinements from all previous agents, ensuring cohesion and resolving any conflicts between optimizations.

Completeness Agent

Performs a final verification to ensure comprehensive coverage and that no critical aspects have been lost in optimization.
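One plausible way to build these pre-configured agents is to give each a role instruction based on its description and have it ask an LLM to rewrite the prompt under that instruction. In this sketch, `llm` is a hypothetical placeholder for any text-completion function, such as a call into a model SDK. The instruction wording paraphrases the descriptions above and is not Strongly.AI's actual agent prompting.

```python
from typing import Callable, Dict, List

# Assumption: any function that takes prompt text and returns the model's reply.
LLM = Callable[[str], str]

# Role instructions paraphrased from the agent descriptions (illustrative only).
AGENT_INSTRUCTIONS: Dict[str, str] = {
    "clarity": "Rewrite the prompt to remove ambiguity and use straightforward language.",
    "relevance": "Remove any element that is not directly relevant to the task.",
    "precision": "Add specificity so the prompt elicits precise, accurate answers.",
    "creativity": "Add elements that encourage creative and diverse outputs.",
    "engagement": "Structure the prompt so the model stays focused on the task.",
    "brevity": "Condense the prompt to its essential elements without changing intent.",
    "consolidator": "Merge all refinements into one cohesive prompt, resolving conflicts.",
    "completeness": "Check that nothing critical was lost and restore any missing requirement.",
}


def make_llm_agent(name: str, llm: LLM) -> Callable[[str], str]:
    """Build an agent that asks the LLM to rewrite a prompt according to its role."""
    instruction = AGENT_INSTRUCTIONS[name]

    def agent(prompt: str) -> str:
        meta_prompt = (
            f"You are the {name} agent in a prompt-optimization chain.\n"
            f"{instruction}\n"
            "Return only the revised prompt.\n\n"
            f"Prompt to revise:\n{prompt}"
        )
        return llm(meta_prompt).strip()

    return agent


def optimize(prompt: str, names: List[str], llm: LLM) -> str:
    """Run the named agents in order, each receiving the previous agent's output."""
    for name in names:
        prompt = make_llm_agent(name, llm)(prompt)
    return prompt
```

If you pass a stub function as `llm`, the chain can run offline in tests. Swapping in a real model client leaves the rest of the structure unchanged.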

Custom Agent Creation

Beyond the pre-configured chain, users can create their own specialized agents to address unique requirements. This extensibility is what separates Strongly.AI from static prompt templates - your optimization pipeline evolves with your needs.

Domain-Specific Agents

Tailored for particular industries or fields - healthcare, finance, engineering, or any specialized domain with unique vocabulary and requirements.

Style Agents

Optimize prompts for specific writing styles, tone, or brand voices. Ensure consistency across all LLM-generated content.

Localization Agents

Adapt prompts for different cultural contexts and languages, ensuring outputs resonate with target audiences worldwide.

Compliance Agents

Ensure adherence to regulatory requirements or company policies. Critical for enterprises in regulated industries.
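A custom agent can join the same chain as long as it follows the same contract: prompt text in, refined prompt text out. The sketch below is hypothetical and not Strongly.AI's API. It shows a domain-specific agent that replaces colloquial terms using a user-supplied glossary, and a compliance agent that appends required policy clauses when they are missing. The glossary entry and the policy sentence are invented examples.

```python
import re
from typing import Callable, Dict, List

Agent = Callable[[str], str]


def make_glossary_agent(glossary: Dict[str, str]) -> Agent:
    """Hypothetical domain agent: swap colloquial terms for preferred vocabulary."""

    def agent(prompt: str) -> str:
        for colloquial, preferred in glossary.items():
            # Whole-word, case-insensitive match; a callable replacement avoids
            # treating backslashes in `preferred` as regex escapes.
            pattern = re.compile(rf"\b{re.escape(colloquial)}\b", re.IGNORECASE)
            prompt = pattern.sub(lambda _match: preferred, prompt)
        return prompt

    return agent


def make_compliance_agent(required_clauses: List[str]) -> Agent:
    """Hypothetical compliance agent: append any required clause not already present."""

    def agent(prompt: str) -> str:
        missing = [c for c in required_clauses if c.lower() not in prompt.lower()]
        return " ".join([prompt, *missing]) if missing else prompt

    return agent


if __name__ == "__main__":
    healthcare = make_glossary_agent({"heart attack": "myocardial infarction"})
    policy = make_compliance_agent(["Do not provide a diagnosis."])
    print(policy(healthcare("Summarize the risks after a heart attack.")))
```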

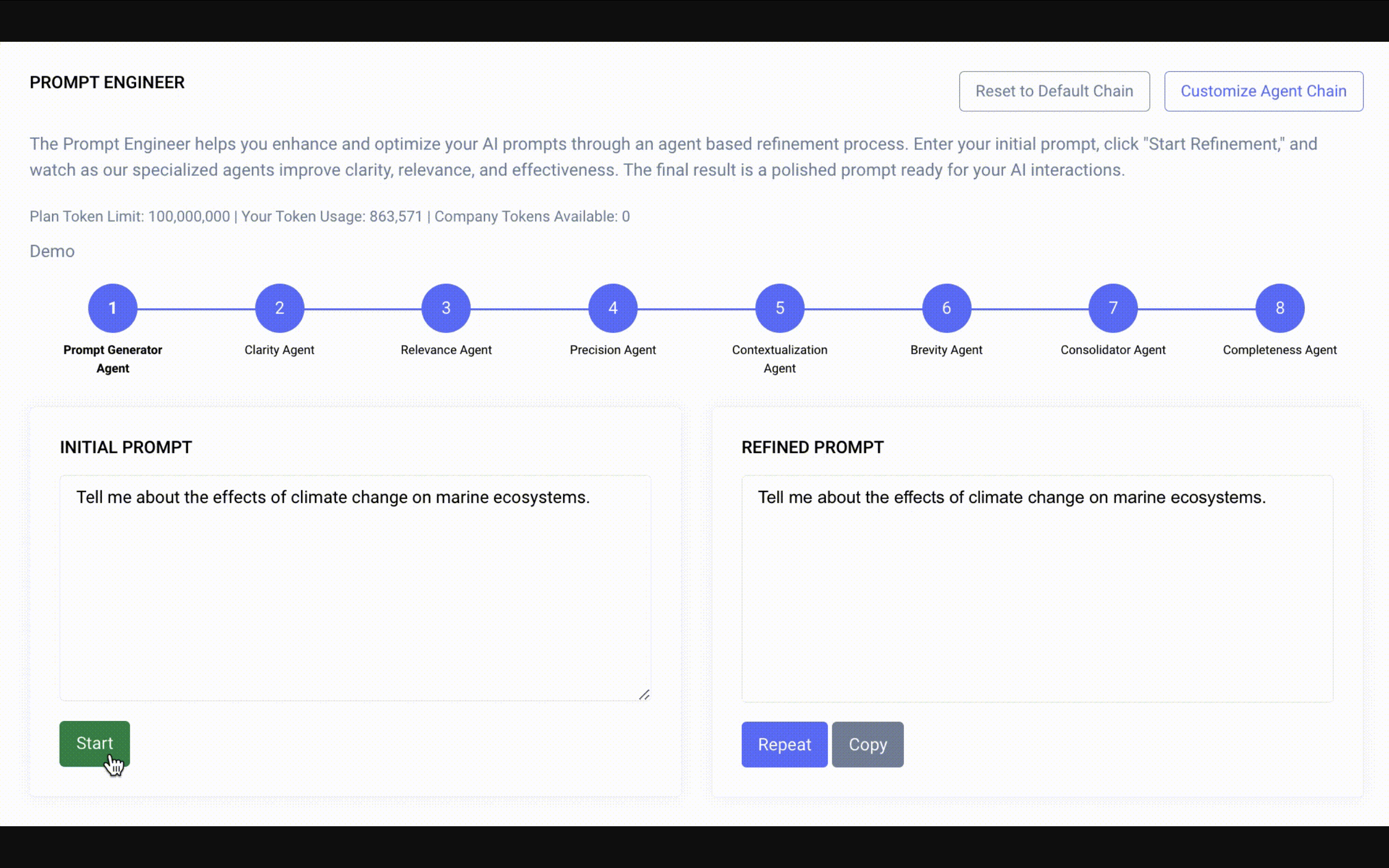

The Prompt Optimization Process

The optimization pipeline follows a structured, repeatable process that transforms raw prompts into precision-engineered instructions. Each stage builds upon the previous one, creating a compound improvement effect.

Initial Prompt Input

The user provides a raw prompt for optimization - it can be a rough idea, a partial instruction, or a detailed but unrefined query.

Agent Chain Configuration

Select from pre-configured agents or assemble a custom chain tailored to your use case. Order and composition matter.
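Configuring a chain by name can be sketched with a simple registry that holds both pre-configured and custom agents. Everything here is hypothetical: the `register` decorator, `run_configured`, and two deliberately crude toy agents. They show in miniature why order matters: a truncating step placed after an expanding step can undo the expansion.

```python
from typing import Callable, Dict, List

Agent = Callable[[str], str]

# Hypothetical name -> agent registry shared by pre-configured and custom agents.
REGISTRY: Dict[str, Agent] = {}


def register(name: str) -> Callable[[Agent], Agent]:
    """Decorator that makes an agent selectable by name in a chain config."""
    def decorator(fn: Agent) -> Agent:
        REGISTRY[name] = fn
        return fn
    return decorator


@register("precision")
def precision(prompt: str) -> str:
    # Toy stand-in: ask for quantified answers.
    return prompt + " Give figures with units."


@register("brevity")
def brevity(prompt: str) -> str:
    # Crude toy stand-in: keep only the first eight words.
    return " ".join(prompt.split()[:8])


def run_configured(prompt: str, names: List[str]) -> str:
    """Resolve agent names, then run them in the configured order."""
    unknown = [n for n in names if n not in REGISTRY]
    if unknown:
        raise KeyError(f"Unknown agents: {unknown}")
    for name in names:
        prompt = REGISTRY[name](prompt)
    return prompt


if __name__ == "__main__":
    p = "Describe how ocean temperature has changed since 1900."
    print(run_configured(p, ["brevity", "precision"]))  # keeps the added request
    print(run_configured(p, ["precision", "brevity"]))  # truncation drops it
```

With the same two agents, running `brevity` then `precision` keeps the request for figures. Running `precision` then `brevity` truncates that request away.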

Sequential Agent Processing

The prompt passes through each agent in the configured sequence. Each agent applies its specialized refinement and passes the improved version forward.

Iterative Refinement

Additional passes may be initiated for further refinement. The system learns from each iteration, applying progressively deeper optimizations.
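The control flow for additional passes can be sketched as "re-run the whole chain until a pass stops changing the prompt, or a pass limit is reached." This is an assumption about the loop only. It does not model the cross-iteration learning described above, and `refine_iteratively` and the toy agent are hypothetical.

```python
from typing import Callable, Sequence, Tuple

Agent = Callable[[str], str]


def refine_iteratively(
    prompt: str, agents: Sequence[Agent], max_passes: int = 3
) -> Tuple[str, int]:
    """Re-run the whole chain until a pass changes nothing or max_passes is hit.

    Returns the final prompt and the number of passes actually run.
    """
    passes = 0
    for _ in range(max_passes):
        passes += 1
        updated = prompt
        for agent in agents:
            updated = agent(updated)
        if updated == prompt:
            break  # converged: this pass made no further change
        prompt = updated
    return prompt, passes


def drop_one_exclamation(prompt: str) -> str:
    # Hypothetical agent that tones down emphasis a little on each pass.
    return prompt[:-1] if prompt.endswith("!") else prompt
```

The pass limit prevents endless loops when agents keep changing each other's output. This can happen if two agents pull a prompt in opposite directions.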

Final Verification

The Completeness Agent ensures the optimized prompt meets all required criteria and that no critical context has been lost during optimization.
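A very simple form of this final check verifies that every user-specified required element still appears in the optimized prompt. The sketch assumes the user supplies that list of required elements, and it uses a crude case-insensitive substring test. A real Completeness Agent would more likely use an LLM to judge coverage, and `missing_requirements` and `verify` are hypothetical names.

```python
from typing import List


def missing_requirements(optimized: str, required: List[str]) -> List[str]:
    """Return the required elements that no longer appear in the optimized prompt."""
    text = optimized.lower()
    return [item for item in required if item.lower() not in text]


def verify(optimized: str, required: List[str]) -> str:
    """Return the prompt unchanged if complete, otherwise fail loudly."""
    missing = missing_requirements(optimized, required)
    if missing:
        raise ValueError(f"Optimization dropped required elements: {missing}")
    return optimized
```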

Output

The fully optimized prompt is delivered - ready for use with your chosen LLM, complete with version history for comparison.

Technical Advances

Strongly.AI's agent system incorporates several technical advances that set it apart from simple prompt template libraries or manual optimization workflows.

Flexible Chain Architecture

Easy integration of custom agents and reconfiguration of sequences. Add, remove, or reorder agents without rebuilding the pipeline.
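To illustrate "add, remove, or reorder without rebuilding," the chain can be held as an editable list of named steps. The `Chain` class below is a hypothetical structure that shows the idea. It is not Strongly.AI's data model.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple

Agent = Callable[[str], str]


@dataclass
class Chain:
    """An ordered, editable sequence of named agents (hypothetical structure)."""

    steps: List[Tuple[str, Agent]] = field(default_factory=list)

    def names(self) -> List[str]:
        return [name for name, _ in self.steps]

    def add(self, name: str, agent: Agent, position: Optional[int] = None) -> None:
        # Append by default, or insert at a given position.
        if position is None:
            self.steps.append((name, agent))
        else:
            self.steps.insert(position, (name, agent))

    def remove(self, name: str) -> None:
        self.steps = [(n, a) for n, a in self.steps if n != name]

    def move(self, name: str, position: int) -> None:
        # list.index raises ValueError if the agent is not in the chain.
        step = self.steps.pop(self.names().index(name))
        self.steps.insert(position, step)

    def run(self, prompt: str) -> str:
        for _, agent in self.steps:
            prompt = agent(prompt)
        return prompt
```

Because agents are stored by name alongside the callable, reconfiguring the chain is a list edit rather than a rebuild.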

Inter-Agent Learning

Agents share insights with each other, continuously improving chain performance. Each agent's output informs the next agent's strategy.
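One simple interpretation of agents sharing insights is a notes object that travels with the prompt. With it, a later agent can act on what an earlier one observed. The sketch assumes that design. It does not describe how Strongly.AI actually implements inter-agent learning, and the agents, word list, and `run_shared` are hypothetical.

```python
import re
from typing import Callable, Dict, List, Tuple

# Shared notes travel alongside the prompt through the chain.
Notes = Dict[str, List[str]]
Agent = Callable[[str, Notes], Tuple[str, Notes]]

# Hypothetical list of words the scout treats as vague.
VAGUE_WORDS = {"some", "things", "stuff"}


def ambiguity_scout(prompt: str, notes: Notes) -> Tuple[str, Notes]:
    # Records vague words it finds but leaves the prompt unchanged.
    words = set(re.findall(r"[a-z]+", prompt.lower()))
    notes.setdefault("vague_terms", []).extend(sorted(words & VAGUE_WORDS))
    return prompt, notes


def precision_agent(prompt: str, notes: Notes) -> Tuple[str, Notes]:
    # Acts on the scout's findings instead of re-analyzing the prompt.
    vague = notes.get("vague_terms", [])
    if vague:
        prompt += f" Replace vague terms ({', '.join(vague)}) with specific examples."
    return prompt, notes


def run_shared(prompt: str, agents: List[Agent]) -> Tuple[str, Notes]:
    notes: Notes = {}
    for agent in agents:
        prompt, notes = agent(prompt, notes)
    return prompt, notes
```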

Adaptive Optimization Paths

Dynamic adjustments based on input prompt characteristics. The chain automatically adapts its behavior depending on the prompt type and complexity.
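An adaptive path can be approximated by choosing which agents to run from simple features of the incoming prompt. The thresholds, cue words, and the `choose_agents` function below are invented for illustration. The article does not describe how Strongly.AI's chain actually adapts.

```python
from typing import List

# Hypothetical trigger words that suggest an open-ended, creative task.
CREATIVE_CUES = {"brainstorm", "ideas", "imagine"}


def choose_agents(prompt: str) -> List[str]:
    """Pick an agent sequence from simple prompt features (illustrative heuristics)."""
    words = prompt.split()
    plan = ["clarity", "relevance"]
    if len(words) < 15:
        # Assumed heuristic: very short prompts are often underspecified.
        plan.append("precision")
    if len(words) > 150:
        # Assumed heuristic: very long prompts often carry redundancy.
        plan.append("brevity")
    if any(w.lower().strip(".,!?") in CREATIVE_CUES for w in words):
        plan.append("creativity")
    # Every path ends with integration and a final completeness check.
    plan += ["consolidator", "completeness"]
    return plan
```

The returned list of names could then be resolved against whatever agent registry the chain uses.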

Prompt Version Control

Maintains a full history of prompt iterations for comparison and analysis. Understand exactly how each agent modified your prompt.
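A minimal form of prompt version control records a snapshot after every agent and uses a diff to show what each stage changed. The sketch uses Python's standard `difflib.unified_diff`. The helper names `run_with_history` and `diff_stage` are hypothetical, and the sketch makes no claim about how Strongly.AI stores history.

```python
import difflib
from typing import Callable, List, Sequence, Tuple

Agent = Callable[[str], str]
History = List[Tuple[str, str]]  # (stage name, prompt after that stage)


def run_with_history(prompt: str, agents: Sequence[Tuple[str, Agent]]) -> History:
    """Run the chain and keep a snapshot of the prompt after every agent."""
    history: History = [("input", prompt)]
    for name, agent in agents:
        prompt = agent(prompt)
        history.append((name, prompt))
    return history


def diff_stage(history: History, index: int) -> str:
    """Unified diff between the snapshot before and after stage `index` (>= 1)."""
    (before_name, before), (after_name, after) = history[index - 1], history[index]
    lines = difflib.unified_diff(
        before.splitlines(), after.splitlines(),
        fromfile=before_name, tofile=after_name, lineterm="",
    )
    return "\n".join(lines)
```

A stage that made no change produces an empty diff, which makes it easy to spot agents that are not contributing to a given prompt.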

API Integration

Direct incorporation into existing workflows and applications. Optimize prompts programmatically within your production systems.

Why does this matter? These advances shift prompt optimization from a manual art form into an automated, repeatable engineering process - making it accessible to every team, not just prompt engineering specialists.

Use Case: Optimizing a Research Query Prompt

To understand the power of agent chain optimization, consider this real-world example. A researcher starts with a broad, unfocused prompt and transforms it into a precision-engineered instruction through the agent chain.

Original prompt:

"Tell me about the effects of climate change on marine ecosystems."

Optimized prompt:

Analyze climate change effects on marine ecosystems:

- Ocean temperature: increase since 1900, 2100 projection, impact on biodiversity (name 3 species with shifted ranges).

- Ocean acidification: current pH, change rate since industrialization, effect on coral reefs (global decline %, most vulnerable system).

- Sea level rise: annual increase over last decade, impact on coastal habitats (quantify mangrove loss in one region).

- Altered ocean currents: Gulf Stream changes in last 50 years, influence on fish populations (North Atlantic cod stock changes since 1950).

Conclude with two evidence-based predictions for marine food webs and global fisheries by 2050, considering cumulative effects and potential tipping points.

The result speaks for itself. The optimized prompt is dramatically more specific, structured, and actionable, turning a vague question into a precision-engineered instruction that is far more likely to produce consistent, high-quality LLM output.

Custom Chain Example: Legal Document Analysis

The real power of Strongly.AI's system emerges when organizations build custom agent chains tailored to their specific domain. Consider how a law firm could create a specialized chain for analyzing legal documents.

Legal Terminology Agent

Ensures correct legal language usage and replaces colloquial terms with precise juridical vocabulary.

Jurisdiction-Specific Agent

Focuses prompts on the relevant laws and regulatory framework for the specific jurisdiction in question.

Case Law Agent

Incorporates references to relevant precedents and instructs the LLM to ground its analysis in established case law.

Client-Specific Agent

Tailors the prompt to the client's particular situation, incorporating relevant facts and circumstances.

Confidentiality Agent

Excludes privileged information and sanitizes any data that should not be sent to external LLM services.
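One mechanical piece of a Confidentiality Agent could be redacting known identifiers before a prompt leaves the firm. The sketch below uses regular expressions and a caller-supplied list of client names. The patterns, placeholders, and `redact` function are hypothetical. The patterns are crude: they will miss some identifiers and flag some harmless strings, such as certain dates. Deciding what is actually privileged still requires legal judgment that a regex cannot provide.

```python
import re
from typing import Dict, List, Tuple

# Hypothetical patterns; a real deployment would need a vetted, firm-specific set.
PATTERNS: Dict[str, re.Pattern] = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(prompt: str, client_names: List[str]) -> Tuple[str, List[str]]:
    """Replace sensitive spans with placeholders; return the text and what was removed."""
    found: List[str] = []
    # Redact caller-supplied client names first, case-insensitively.
    for name in client_names:
        pattern = re.compile(re.escape(name), re.IGNORECASE)
        found += pattern.findall(prompt)
        prompt = pattern.sub("[CLIENT]", prompt)
    # Then apply the generic identifier patterns.
    for label, pattern in PATTERNS.items():
        found += pattern.findall(prompt)
        prompt = pattern.sub(f"[{label}]", prompt)
    return prompt, found
```

Returning the list of removed spans lets the firm log what was withheld without sending it to the external service.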

Domain expertise matters. A generic prompt optimization tool cannot understand the nuances of legal terminology, jurisdictional requirements, or privilege rules. Custom agents encode this expertise directly into the optimization pipeline, so every prompt starts from the firm's own legal standards by default.

“Custom agent chains don't just improve prompts - they encode institutional knowledge into your AI workflow. Every interaction benefits from your organization's accumulated expertise.”

The Future of Prompt Optimization with Strongly.AI

As LLM technology continues to advance, Strongly.AI is committed to evolving our prompt optimization system. Here is what we are building toward.

Agent Marketplace

A marketplace for sharing and discovering community-created custom agents - browse, rate, and integrate agents built by domain experts.

Advanced Analytics

Deep analytics for understanding and improving custom agent performance - see exactly how each agent impacts output quality.

LLM-Specific Optimization

Integration with specific LLM architectures for tailored optimization - prompts tuned for the exact model you are using.

Real-Time Adjustment

Real-time prompt adjustment based on LLM output analysis - the system learns what works and adapts automatically.

Multi-Modal Optimization

Multi-modal prompt optimization for text-to-image, text-to-video, and other emerging AI models beyond traditional text.

Conclusion

Strongly.AI's customizable agent system for prompt optimization represents a significant step forward in realizing the potential of Large Language Models. By providing a flexible framework that lets users create and integrate custom agents, we have built a robust, adaptable system designed to make every interaction with an LLM as effective and efficient as possible.

Whether you use our pre-configured agents or build a highly specialized chain, Strongly.AI's approach to prompt optimization can help you unlock the full capabilities of language models across a wide range of applications and industries.

“The future of prompt engineering isn't about writing better prompts manually - it's about building intelligent systems that optimize prompts automatically, consistently, and at scale.”

Ready to Optimize Your Prompts?

See how Strongly.AI's agent chains can optimize every LLM interaction in your organization.
