The Hidden Dangers of Unprotected LLM Usage

Imagine this scenario: your marketing team is using a popular LLM to draft email campaigns. Unknowingly, they paste a customer list into the prompt - names, email addresses, and purchase history included. In an instant, that sensitive data is transmitted to a third-party AI provider, potentially violating data protection regulations and compromising customer trust.

“The most dangerous data leaks aren't the ones you plan for - they're the ones that happen in the space between a copy and a paste.”

This is not a far-fetched scenario. Many organizations remain unaware of the risks that come with unfiltered LLM usage. Every interaction with an external model is a potential exposure point.

Without guardrails in place, every prompt sent to an external LLM is an uncontrolled data transfer - one that could trigger regulatory action, erode customer trust, or expose trade secrets.

PII Exposure

Personal data - names, SSNs, emails - can be inadvertently shared in prompts, leading to privacy breaches and regulatory violations.

IP Theft

Proprietary information or trade secrets can be leaked through prompts or extracted from model responses.

Compliance Nightmares

Violations of GDPR, CCPA, or industry-specific regulations can result in hefty fines and legal proceedings.

Reputational Damage

Data breaches erode customer trust and tarnish brand image - damage that can take years to repair.

LLM Guardrails: Your First Line of Defense

LLM guardrails act as a sophisticated filtration system, scrutinizing both the input (prompts) and output (responses) of every AI interaction. They serve as a protective barrier, ensuring that sensitive information never reaches unauthorized parties or external AI systems.

Every prompt passes through multiple concentric layers of protection. Each layer catches a different class of sensitive content - from obvious PII patterns to nuanced topic-level detection - ensuring nothing slips through to the model.
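To make the layering concrete, here is a minimal sketch in Python. It is an illustration only, not Strongly.AI's implementation: the layer functions, the `guarded_call` wrapper, the `echo_model` stand-in, and the "Project Falcon" codename are all hypothetical names invented for this example. It shows the same chain of layers applied to the prompt before the model sees it and to the response before the user sees it.

```python
import re
from typing import Callable, List

# A layer takes text and returns a (possibly redacted) copy of it.
Layer = Callable[[str], str]

def redact_emails(text: str) -> str:
    # Layer 1: an obvious PII pattern.
    return re.sub(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+", "[EMAIL]", text)

def redact_codename(text: str) -> str:
    # Layer 2: an organization-specific phrase ("Project Falcon" is made up).
    return re.sub(r"project falcon", "[REDACTED]", text, flags=re.IGNORECASE)

def apply_layers(text: str, layers: List[Layer]) -> str:
    # Each layer sees the output of the previous one.
    for layer in layers:
        text = layer(text)
    return text

def guarded_call(prompt: str, model: Callable[[str], str], layers: List[Layer]) -> str:
    safe_prompt = apply_layers(prompt, layers)  # input guardrail
    response = model(safe_prompt)               # the model only sees filtered text
    return apply_layers(response, layers)       # output guardrail

def echo_model(prompt: str) -> str:
    # Stand-in for a call to an external LLM.
    return f"Draft based on: {prompt}"

LAYERS = [redact_emails, redact_codename]
print(guarded_call("Email jane@example.com about Project Falcon", echo_model, LAYERS))
```

Running the same layers on the response is a design choice for this sketch; a real deployment might use different rules on each side.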

Strongly.AI's Filter Feature: Comprehensive Protection, Uncompromised Performance

At Strongly.AI, we've developed a state-of-the-art filter feature that offers unparalleled protection without sacrificing the power of LLMs. Our approach is both robust and flexible, catering to the unique needs of each organization.

Pre-built PII Filters: Ready-to-Deploy Protection

Our platform comes equipped with pre-built filters designed to catch common PII patterns, including:

Social Security Numbers

Catches SSN patterns in all common formats, redacting them before they reach any external service.

Credit Card Information

Detects and blocks credit card numbers, CVVs, and expiration dates across all major card networks.

Email Addresses & Phone Numbers

Advanced pattern recognition for emails and phone numbers in domestic and international formats.

IDs & Documents

Passport numbers, driver's license IDs, and other government-issued document identifiers.

These filters use advanced pattern recognition techniques to identify and redact sensitive information before it ever reaches the LLM.
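As a rough illustration of pattern-based redaction, the sketch below uses Python's standard `re` module. It is not the platform's filter set: the patterns, the placeholder tokens, and the `redact_pii` function are assumptions made for this example. It covers only dashed US SSNs, email addresses, and card numbers, and it uses the Luhn checksum to avoid flagging arbitrary long digit runs as cards. CVVs, expiration dates, phone numbers, and document IDs are left out.

```python
import re

SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")

def luhn_valid(number: str) -> bool:
    """Return True if the digits in `number` pass the Luhn checksum."""
    digits = [int(c) for c in number if c.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:      # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0

def redact_pii(text: str) -> str:
    text = SSN_RE.sub("[SSN]", text)
    text = EMAIL_RE.sub("[EMAIL]", text)
    # Only redact digit runs that are plausible card numbers.
    text = CARD_RE.sub(
        lambda m: "[CARD]" if luhn_valid(m.group()) else m.group(), text
    )
    return text

print(redact_pii("SSN 123-45-6789, card 4111 1111 1111 1111, mail a.b@example.org"))
```

Patterns this simple will miss unusual formats and can produce false positives, which is one reason production filters tend to combine several detection techniques.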

[Figure: Prompt → Guardrail → Model → Guardrail → Response; label: Redacted]

Custom Filter Creation: Tailored Security for Your Business

Every organization has unique security requirements. That's why we offer three powerful methods for creating custom filters:

Pattern Matching

Use the power of regular expressions to create highly specific filters. For example, you could create a filter to catch internal project codes following a particular format - ensuring proprietary identifiers never leave your environment.
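For a sense of what such a filter might look like, here is a small Python sketch. The code format `PRJ-YYYY-NNNN` is invented for this example, as are the function names; substitute whatever convention your organization actually uses.

```python
import re

# Hypothetical format: "PRJ-" + 4-digit year + "-" + 4-digit sequence number.
PROJECT_CODE_RE = re.compile(r"\bPRJ-\d{4}-\d{4}\b")

def find_project_codes(text: str) -> list:
    """Return every project code found in the text, in order."""
    return PROJECT_CODE_RE.findall(text)

def redact_project_codes(text: str) -> str:
    """Replace each project code with a placeholder token."""
    return PROJECT_CODE_RE.sub("[PROJECT_CODE]", text)

prompt = "Status of PRJ-2024-0042 and PRJ-2023-0007?"
print(find_project_codes(prompt))
print(redact_project_codes(prompt))
```

The `\b` word boundaries keep the pattern from matching inside longer identifiers, and the fixed digit counts mean near-misses such as `PRJ-24-1` pass through untouched.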

Word or Phrase Filtering

Block specific terms or expressions that are sensitive to your organization. This could include product codenames, client names, internal jargon, or any vocabulary unique to your business.
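A word or phrase filter can be sketched in a few lines of Python. The blocklist entries and function names below are hypothetical, chosen only for illustration. The sketch matches whole words and phrases case-insensitively, so a blocked term is caught regardless of capitalization while longer words that merely contain it are left alone.

```python
import re
from typing import Iterable

def build_phrase_filter(phrases: Iterable[str]) -> re.Pattern:
    # Longest phrases first, so a longer entry wins over a shorter prefix of it.
    ordered = sorted(phrases, key=len, reverse=True)
    # re.escape keeps punctuation in a phrase from being read as regex syntax.
    alternation = "|".join(re.escape(p) for p in ordered)
    # Lookarounds require that the match is not embedded in a longer word.
    return re.compile(rf"(?<!\w)(?:{alternation})(?!\w)", re.IGNORECASE)

# Made-up sensitive terms: a codename, a client name, and internal jargon.
BLOCKLIST = build_phrase_filter(["Project Falcon", "Acme Corp", "bluebird"])

def redact_phrases(text: str) -> str:
    return BLOCKLIST.sub("[BLOCKED]", text)

print(redact_phrases("Ask ACME corp about project falcon; bluebirds are fine"))
```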

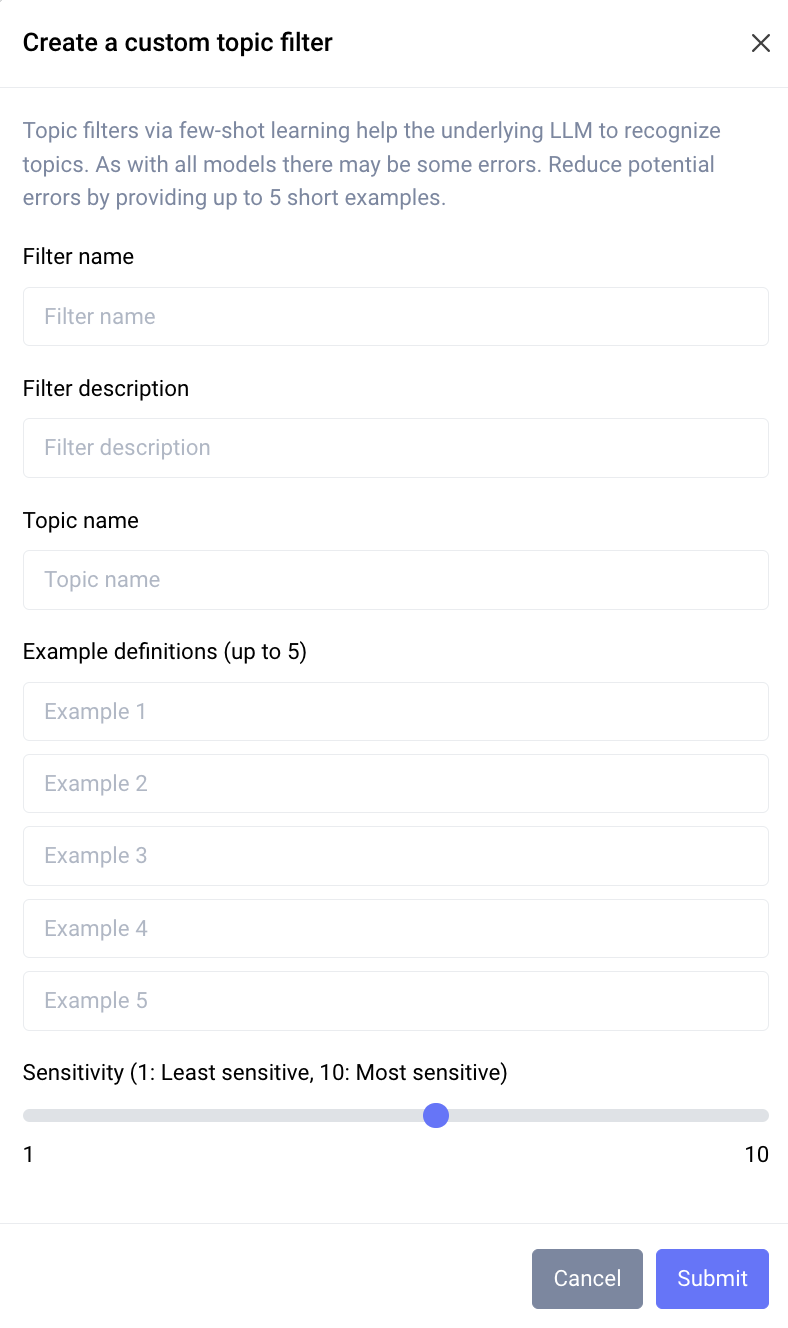

Topic-Based Filtering with Few-Shot Learning

This is where our technology truly shines. Using advanced machine learning techniques, we create filters that understand context and nuance - not just exact matches.

Few-shot learning lets you define what to filter with just 3-5 examples. The system generates thousands of similar patterns internally, creating a robust model that catches content you never explicitly defined - even when phrased differently than your examples.

Deep Dive: Few-Shot Learning for Topic Filtering

Our topic-based filtering uses a few-shot learning approach, allowing the system to understand complex concepts with minimal examples. Here's how it works:

Provide Examples

You provide a small set of examples - typically 3 to 5 - that represent the topic you want to filter.

AI Analysis

Our AI analyzes these examples to understand the underlying patterns, context, and semantic meaning.

Model Generation

The system generates a large number of similar examples internally, creating a robust model of the topic.

Real-Time Scanning

This model scans incoming text in real time, identifying and filtering content that matches the learned topic - even when phrased differently than your original examples.

For instance, if you want to filter discussions about an unreleased product, you might provide a few sentences describing its features. The system will then catch a wide range of related content, even when it doesn't use the exact words from your examples.
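The idea of matching by similarity to a handful of examples, rather than by exact wording, can be sketched with the Python standard library alone. This is a deliberately simple stand-in, not the method described above: it scores word overlap (bag-of-words cosine similarity) against each example, does not generate additional examples, and only catches paraphrases that share vocabulary with the examples. A production system would more likely use a learned text representation. The class name, threshold, stopword list, and example sentences are all assumptions for this illustration.

```python
import math
import re
from collections import Counter
from typing import List

STOPWORDS = {"the", "a", "an", "of", "to", "and", "in", "is",
             "for", "our", "will", "with", "on", "it"}

def vectorize(text: str) -> Counter:
    # Lowercase word counts with common filler words removed.
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return Counter(t for t in tokens if t not in STOPWORDS)

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

class TopicFilter:
    def __init__(self, examples: List[str], threshold: float = 0.3):
        self.examples = [vectorize(e) for e in examples]
        self.threshold = threshold  # arbitrary cut-off chosen for this sketch

    def score(self, text: str) -> float:
        # Similarity to the closest example.
        v = vectorize(text)
        return max((cosine(v, e) for e in self.examples), default=0.0)

    def matches(self, text: str) -> bool:
        return self.score(text) >= self.threshold

unreleased_product = TopicFilter([
    "The new headset ships with a folding battery pack",
    "Our unreleased headset has a 12 hour battery",
    "Launch pricing for the headset is still confidential",
])

print(unreleased_product.matches("Does the headset battery last 12 hours?"))
print(unreleased_product.matches("What is the weather in Paris tomorrow?"))
```

The first query shares no full sentence with any example but still scores above the threshold, while the unrelated query scores zero.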

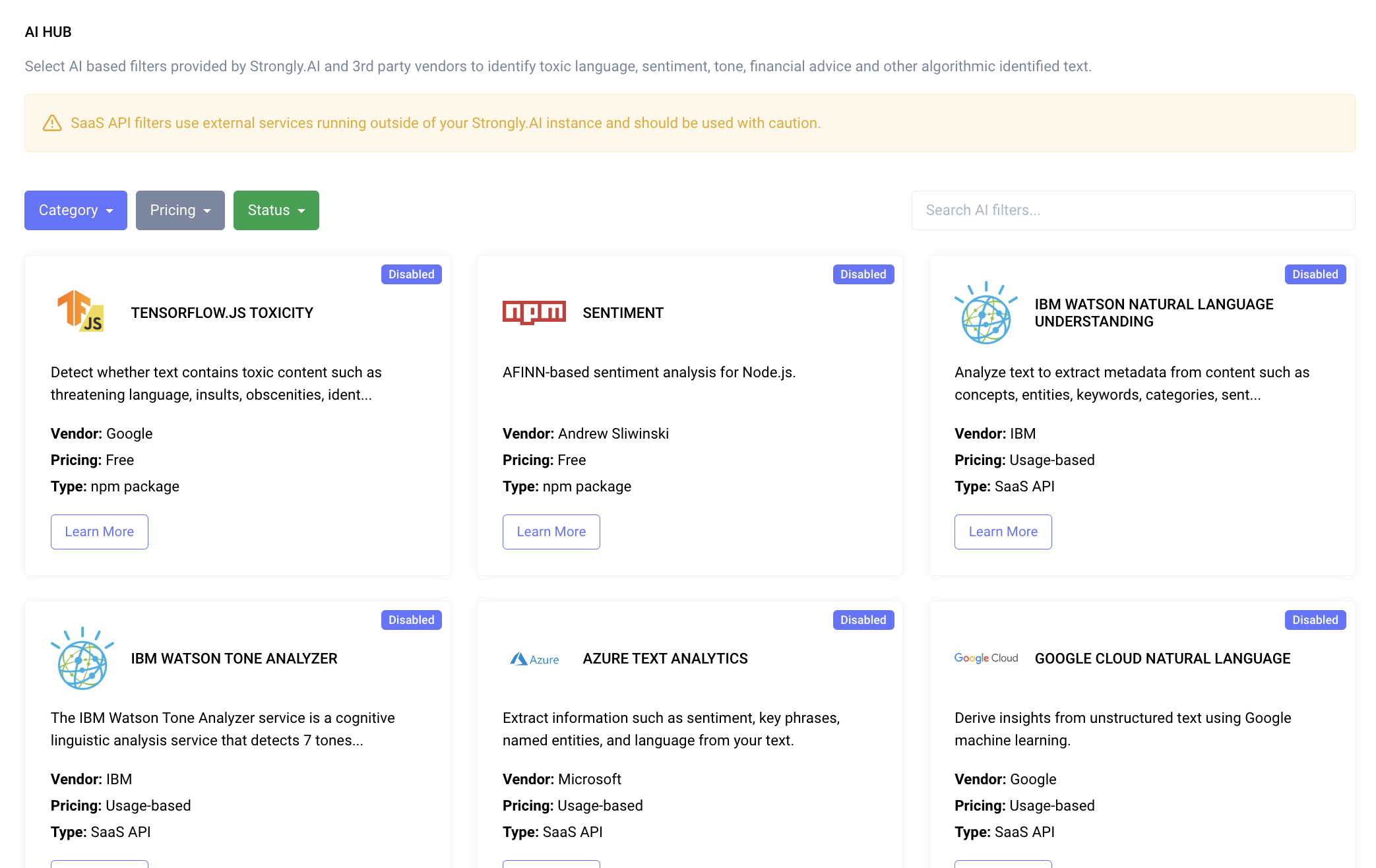

AI Hub: Expanding Your Security Arsenal

For organizations with specialized needs, our AI Hub offers integration with third-party filters. This allows you to use industry-specific security solutions directly within our platform - extending protection beyond what any single vendor can offer.

Granular Control: The Right Protection at Every Level

Security isn't one-size-fits-all. Strongly.AI allows you to apply filters with pinpoint accuracy - ensuring every team, role, and individual gets exactly the protection they need.

This layered approach means your R&D team can have strict IP protection while your marketing team gets broader creative freedom - all managed from a single dashboard with full audit trails.
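One way to picture level-based control is as a policy object that resolves the filters in force for a given request. The Python sketch below is hypothetical throughout: the `GuardrailPolicy` class, the filter names, and the team and user IDs are invented here and do not reflect the platform's actual configuration model. It covers organization, team, and user levels only, and it assumes lower levels can add filters but never remove organization-wide ones.

```python
from dataclasses import dataclass, field
from typing import Dict, Set

@dataclass
class GuardrailPolicy:
    org_filters: Set[str] = field(default_factory=set)
    team_filters: Dict[str, Set[str]] = field(default_factory=dict)
    user_filters: Dict[str, Set[str]] = field(default_factory=dict)

    def effective_filters(self, team: str, user: str) -> Set[str]:
        # Union of all levels: unknown teams or users fall back to org-wide rules.
        return (self.org_filters
                | self.team_filters.get(team, set())
                | self.user_filters.get(user, set()))

policy = GuardrailPolicy(
    org_filters={"ssn", "credit_card"},
    team_filters={
        "rnd": {"project_codes", "unreleased_product_topic"},  # strict IP protection
        "marketing": {"client_names"},                         # lighter touch
    },
    user_filters={"contractor-17": {"email"}},
)

print(sorted(policy.effective_filters("rnd", "alice")))
print(sorted(policy.effective_filters("marketing", "contractor-17")))
```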

The Business Case for LLM Guardrails

Implementing robust LLM guardrails isn't just about avoiding risks - it's about enabling your organization to fully use AI capabilities with confidence. Here's why business leaders should prioritize this technology:

Regulatory Compliance

Stay ahead of evolving data protection laws and avoid costly penalties from GDPR, CCPA, and industry-specific regulations.

Competitive Advantage

Safely utilize advanced AI tools while competitors hesitate due to security concerns - move faster with confidence.

Customer Trust

Demonstrate your commitment to data protection, enhancing your reputation in the market and deepening customer relationships.

Experimentation Enablement

Enable your teams to experiment freely with AI without fear of data leaks - creativity without compromise.

Cost Savings

Prevent potential data breaches that could result in significant financial damage, legal fees, and remediation costs.

“The question isn't whether to use LLMs - it's whether you can afford to use them without guardrails. Every unprotected interaction is a risk waiting to materialize.”

Secure Your AI Future with Strongly.AI

As AI continues to reshape the business landscape, the question isn't whether to use LLMs, but how to use them safely and effectively. Strongly.AI's LLM guardrails offer a comprehensive solution that addresses the complex security challenges of the AI age.

By implementing our advanced filtering system, you're not just protecting data - you're future-proofing your organization. You're creating an environment where innovation can flourish without compromising security.

Don't wait for a data breach to highlight the importance of LLM security. Proactive guardrails are not overhead - they're an investment in trust, compliance, and competitive advantage. Take control of your AI interactions today.

Contact us at info@strongly.ai for a demo and see how we can tailor our solution to your unique security needs. Together, let's build a safer, more secure AI-powered future.

Ready to Protect Your AI Interactions?

See how Strongly.AI's guardrails keep your data safe while accessing the full power of LLMs.
